Are the World Champions Just Lucky?

By Matthew S. Wilson

“A good player is always lucky” – Capablanca

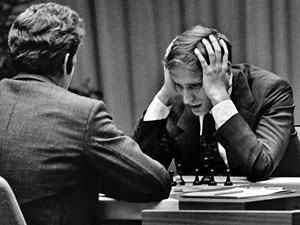

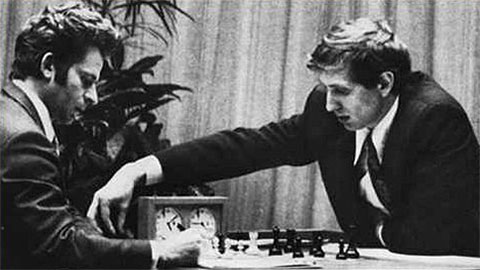

The year is 1972. Fischer has just crushed Spassky by 12.5–8.5. The way he qualified for the match is no less impressive: in 1970 he won the Interzonal with a staggering 18.5/23, 3.5 points ahead of his nearest rival, and in 1971 he defeated Larsen and Taimanov by 6–0. In the final Candidates match, former world champion Tigran Petrosian could only score 2.5–6.5 against him. There can be no doubt that Fischer is the strongest player in the world; how could such dominating results occur by chance?

Spassky vs Fischer ended in a 12.5–8.5 rout by the American

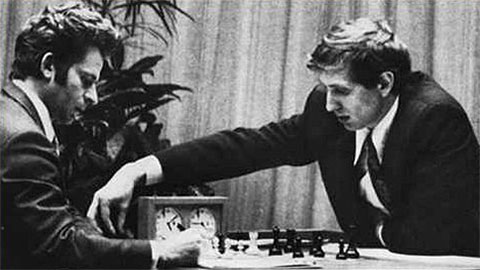

In 1935 Dutch GM Max Euwe (right) defeated World Champion Alexander Alekhine by 15.5–14.5

But not all world champions won so convincingly. Euwe barely beat Alekhine, winning by only 15.5–14.5, even though Alekhine had drinking problems and severely underestimated his challenger. Two years later, a reinvigorated Alekhine scored 15.5–9.5 in a return match. Was Euwe’s 1935 victory just luck? For a long time Euwe was probably the least respected world champion, until the FIDE knockout tournaments crowned players like Khalifman, Ponomariov, and Kasimdhzhanov. Subsequently the knockout tournaments were denounced as being little more than lotteries and FIDE overhauled the world championship cycle.

So how do we distinguish between “lucky” world champions like Khalifman (above) and “convincing” world champions like Fischer? How can we be confident that the winner of a match is really the best player in the world? Fortunately, there are statistical techniques to answer these questions.

Let’s start by analyzing Fischer’s historic 1972 victory over Spassky. The process has three steps:

-

Assume that each player is equally likely to win in every game and then run simulations of the match. Well, actually we need a bit more information, since a game could also be drawn. What draw rate should we assume? I analyzed all the world championship matches, and they seem to fall into three categories. In the “Pre-Capablanca” era, only 31% of world championship games were drawn. As chess understanding improved, errors became less frequent and the draw rate rose to 53% in the games from 1927–1963. Ever since 1966, the draw rate has been fairly stable at 66%. Fischer’s match belongs to the last category. Thus, for each game in the simulations, the computer will assume that there is a 66% chance of a draw, a 17% chance of a Fischer victory, and a 17% chance of a Spassky victory. Later we will test these assumptions. After simulating 40,000 matches, we move on to step 2.

-

Take the result that actually occurred and use the simulations to find out how likely it is. Fischer didn’t show up for game 2 and lost by forfeit. If we exclude this game, then he won the match 12.5–7.5. Returning to the simulations, we then measure the probability of winning by at least 12.5–7.5. This is done by counting all the simulations that ended in 12.5–7.5, 13–7, 12.5–6.5, and even more lopsided margins. These results occur in only 8.3% of the simulations – this number is called the p-value.

-

Use the result from step 2 to reevaluate the assumption in step 1. At the beginning we assumed that the players were equally skilled. But if this were true, then there is only an 8.3% chance of seeing a victory as dominating as 12.5–7.5. This is unlikely, so our assumption that the players are equal is probably wrong. Instead we conclude that Fischer is better.

Looking back at Fischer’s candidates matches, there is only a 0.16% chance of one player winning 6–0 against an equally skillful opponent, so Fischer’s demolitions of Taimanov and Larsen almost surely represent superiority rather than luck.

Now what about the other assumptions I made in order to run the simulations? Is the draw rate really constant at 66% throughout the match? It is easy to imagine that wins can change the draw rate. Maybe after winning, a player is energized and more confident, so the probability of winning rises while draws become less likely. Or perhaps the winner becomes complacent in the next game while the loser is motivated to take revenge. Decisive games can surely shift the players psychologically, but do they actually affect the outcome of the next game? To test this, I examined the results of games immediately following a win or a loss. In the modern era, the player who won the previous game has a 15% of winning, a 19% of losing, and a 66% of drawing the next game. This differs slightly from our assumption of 17%, 17%, and 66%, respectively. However, a simple statistical test reveals that the differences are not significant. I ran similar tests for 1927–1963 and pre-1927, and reached the same conclusion: there is not enough evidence to reject our assumptions.

So we can safely conclude that Fischer was better than Spassky. Should we also find Euwe’s win over Alekhine convincing? Once again, we start by assuming that Euwe and Alekhine are equal. In the match, Euwe prevailed by 15.5–14.5. How likely is such a victory? It turns out that if two equally skilled players play a match under the same conditions, a victory at least as good as Euwe’s will occur 88.3% of the time. Since similar victories occur so frequently, there is no reason to reverse our initial assumption that the players are equal. Euwe did not convincingly show superiority – his triumph could very well have been just luck. In the rematch in 1937, Alekhine prevailed by 15.5–9.5, and such a large margin of victory is seen in only 10.5% of the simulations if we assume that the players are equal. In other words, the p-value is 10.5%. Typically statisticians reject their initial assumption only if the p-value is less than 10%, but here the value is very close. In view of Alekhine’s big victory, it is unlikely that Euwe is his equal.

However, Euwe is hardly alone in scoring match victories that wouldn’t convince a statistician. Perhaps Capablanca really did deserve a return match after losing by 15.5–18.5 to Alekhine, since the p-value is 62.6%. We can’t reject the possibility that Alekhine and Capablanca were actually equal. Even Botvinnik’s 13–8 against Tal in 1961 fails to impress: the p-value, at 16.6%, is higher than 10%. Karpov’s +6, –2, =10 versus Korchnoi in 1981 is one of the few exceptions, having a p-value of 9.1%. Except for Anand’s 3.5–0.5 score against Shirov in 2000 (p-value = 2.9%!), the FIDE knockout tournament champions have been quite unpersuasive in their claim to the ultimate chess title. The best result, Ponomariov’s 4.5–2.5 versus Ivanchuk, still has quite a high p-value: 32.2%. So are the world champions just lucky?

First of all, we should be careful in interpreting our results. Note that a p-value of 16.6% for Botvinnik–Tal in 1961 does not prove that they are equally good players. Rather, it only means that we cannot reject the possibility that they are equal. So we haven’t proven that Botvinnik’s 13–8 is just luck. In addition, there are some factors that the statistical analysis can’t take into account. If one player consistently outplays his opponent, as chess players we can see that he is better even if the score in the match is close. But the statistics only look at the results and not at the quality of play. Most world champions build up the credibility of their match victories by also dominating elite tournaments – this is also ignored in the statistical analysis. For instance, none of Kasparov’s match victories over Karpov were statistically significant. We can reframe the question slightly: starting from 1985, what is the probability of winning 3½ matches out of four against Karpov? The p-value is slightly below 20%. This is still not statistically significant. But if we combine this information with Kasparov’s numerous tournament victories and his long reign as the #1 rated player (there are only two rating lists from 1984–2005 that do not have him at the top), then it is easy to persuade yourself that Kasparov truly was the best player in the world at his time.

– In part two of his article, to be published soon, Matthew Wilson does 40,000 match simulations to assess the chances of World Champion Viswanathan Anand and Challenger Magnus Carlsen in the November match –

About the author

|

Matthew Wilson is a PhD student studying Economics. He graduated from the University of Washington in 2010 with a B.S. in Economics and a B.A. in Math, and then earned an M.S. in Economics from the University of Oregon. He works as a teaching assistant at the University of Oregon, where he has taught applied statistics (econometrics) and economic theory. Currently his research focuses on macroeconomic theory and rational choice.

In chess, he used to be a promising junior, tying for first place in the Washington State Elementary Championships (grades 4-6). He frequently appeared in Washington's top ten and the USCF's top 100 for his age group. But now there is not as much time to study chess, and his rating peaked at 1952. |